After George Mallon had his blood drawn at a routine physical, he learned that something might be gravely wrong. The preliminary results showed he might have blood cancer. Further tests would be needed. Left in suspense, he did what so many people do these days: He opened ChatGPT.

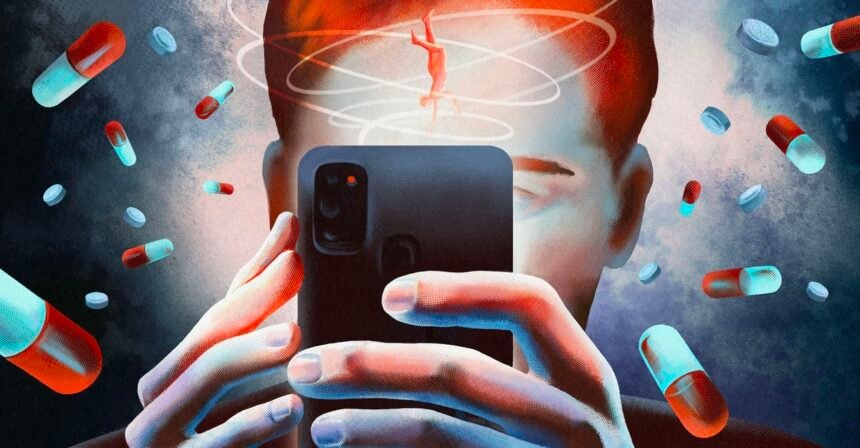

For nearly two weeks, Mallon, a 46-year-old in Liverpool, England, spent hours each day talking with the chatbot about the potential diagnosis. “It just sent me around on this crazy Ferris wheel of emotion and fear,” Mallon told me. His follow-up tests showed it wasn’t cancer after all, but he could not stop talking to ChatGPT about health concerns, querying the bot about every sensation he felt in his body for months. He became convinced that something must be wrong—that a different cancer, or maybe multiple sclerosis or ALS, was lurking in his body. Prompted by his conversations with ChatGPT, he saw various specialists and got MRIs on his head, neck, and spine.

Mallon told me he believes that the cancer scare and ChatGPT together caused him to develop this crippling health anxiety. But he blames the chatbot for keeping him spiraling even after the additional tests indicated that he wasn’t sick. “I couldn’t put it down,” he said. The chatbot kept the conversation going and surfaced articles for him to read. Its humanlike replies led Mallon to view it as a friend.

The first time we met over a video call, Mallon was still shaken by the experience even though the better part of a year had passed. He told me he was “seven months sober” from talking with the chatbot about health symptoms after seeking help from a mental-health coach and starting anxiety medication. But he also feared he could get sucked back in at any moment. When we spoke again a few months later, he shared that he had briefly fallen into the routine again.

Others seem to be struggling with the same problem. Online communities focused on health anxiety (an umbrella term for excessive worrying about illness or bodily sensations) are filling up with conversations about ChatGPT and other AI tools. Some say it makes them spiral more than ever, while others who feel that it helps in the moment admit it has morphed into a compulsion they struggle to resist. I spoke with four therapists who treat the condition (including my own); all of them said that they're seeing clients use chatbots in this way, and that they're concerned about how AI can lead people to constantly seek reassurance, perpetuating the condition. "Because the answers are so immediate and so personalized, it's even more reinforcing than Googling. This kind of takes it to the next level," Lisa Levine, a psychologist who specializes in anxiety and obsessive-compulsive disorder and treats patients with health anxiety specifically, told me.

Experts believe that health anxiety may affect upwards of 12 percent of the population. Many more people struggle with other forms of anxiety and OCD that could similarly be exacerbated by AI chatbots. In posts on X in October, OpenAI CEO Sam Altman declared that the serious mental-health issues surrounding ChatGPT had been mitigated, saying that serious problems affect "a very small percentage of users in mentally fragile states." But mental fragility is not a fixed state; a person can seem fine until they suddenly are not.

During last year's launch of GPT-5, the latest family of AI models that power ChatGPT, Altman said that health conversations are one of the top ways consumers use the chatbot. According to data from OpenAI published by Axios, more than 40 million people turn to the chatbot for medical information every day. In January, the company leaned into this by introducing a feature called ChatGPT Health, encouraging users to upload their medical documents, test results, and data from wellness apps, and to talk with ChatGPT about their health.

The value of these conversations, as OpenAI envisions it, is to "help you feel more informed, prepared, and confident navigating your health." Chatbots certainly might help some people in this regard; for instance, The New York Times recently reported on women turning to chatbots to pin down diagnoses for complex chronic illnesses. Yet OpenAI is also embroiled in controversy about the effects that an overreliance on ChatGPT may have. Putting aside the potential for such products to share inaccurate information, OpenAI has been accused in a string of lawsuits of contributing to mental breakdowns, delusions, and suicides among ChatGPT users. Last November, seven of these suits were filed simultaneously, alleging that OpenAI rushed to release its flagship GPT-4o model and intentionally designed it to keep users engaged and foster emotional reliance. (The company has since retired the model.) In New York, a bill that would ban AI chatbots from giving "substantive" medical advice or acting as a therapist is under consideration as part of a package of bills to regulate AI chatbots.

In response to a request for comment, an OpenAI spokesperson directed me to a company blog post that says: “Our thoughts are with all those impacted by these incredibly heartbreaking situations. We continue to improve ChatGPT’s training to recognize and respond to signs of distress, de-escalate conversations in sensitive moments, and guide people toward real-world support, working closely with mental health clinicians and experts.” The spokesperson also told me that OpenAI continues to improve ChatGPT’s safeguards in long conversations related to suicide or self-harm. The company has previously said it is reviewing the claims in the November lawsuits. It has denied allegations in a lawsuit filed in August that ChatGPT was responsible for a teen’s suicide. (OpenAI has a corporate partnership with The Atlantic’s business team.)

Two years ago, I fell into a cycle of health anxiety myself, sparked by a close friend’s traumatic illness and my own escalating chronic pain and mysterious symptoms. At one point, after I was managing much better, I tried out a few conversations with ChatGPT for a gut-check about minor health issues. But the risk of spiraling was glaring; seeking reassurance like that went against everything I’d learned in therapy. I was thankful I hadn’t thought to turn to AI when I was in the throes of anxiety. I told myself, Never again.

Meanwhile, in the health-anxiety communities I’m part of, I saw people talk more and more about looking to chatbots for comfort. Many say it has made their health anxiety worse. Others say AI has been extraordinarily helpful, calming them down when they’re caught in a cycle of unrelenting worry. And it is that last category that is, in fact, most concerning to psychologists. Health anxiety often functions as a form of OCD with obsessive thoughts and “checking,” or reassurance-seeking compulsions. Therapeutic best practices for managing health anxiety hinge on building self-trust, tolerating uncertainty, and resisting the urge to seek reassurance, but ChatGPT eagerly provides personalized comfort and is available 24/7. That type of feedback only feeds the condition—“a perfect storm,” said Levine, who has seen talking with chatbots for reassurance become a new compulsion in and of itself for some of her clients.

Extended, continuous exchanges have been shown to be a common issue with chatbots and a factor in reported cases of AI-associated "psychosis." Research by OpenAI and the MIT Media Lab has found that longer ChatGPT sessions can lead to addiction, preoccupation, withdrawal symptoms, loss of control, and mood modification. OpenAI has also acknowledged that its safety guardrails can "degrade" in lengthy conversations. Over a 10-day period during his cancer scare, Mallon told me, "I must have clocked over 100 hours minimum on ChatGPT, because I thought I was on the way out. There should have been something in there that stopped me."

In an October blog post, OpenAI said it had consulted more than 170 mental-health professionals to more reliably recognize signs of emotional distress in users. The company also said it updated ChatGPT to give users "gentle reminders" to take breaks during long sessions. OpenAI would not tell me specifically how long into an exchange ChatGPT nudges users to take a break, or how often users actually take a break rather than continue chatting after being served this reminder.

One psychologist I spoke with, Elliot Kaminetzky, an expert on OCD who is optimistic about the use of AI for therapy, suggested that people could tell the chatbot they have health anxiety and “program” it to let them ask about their concerns just once—in theory, preventing the chatbot from goading the user to interact further. Other therapists expressed concern that this is still reassurance-seeking and should be avoided.

When I tested the idea of instructing ChatGPT to restrict how much I could talk to it about health worries, it didn't work. ChatGPT would acknowledge that I had put this guardrail on our conversations, but it also prompted me to keep responding and allowed me to keep asking questions, which it readily answered. It also flattered me at every turn, living up to its reputation for sycophancy. For example, when I told it about a fictional pain in my right side, it cited the guardrail and suggested relaxation techniques, but ultimately took me through a series of possible causes that escalated in severity. It went into detail on risk factors, survival rates, treatments, recovery, and even what to expect if I were to go to the ER. All of this took minimal prompting, and the chatbot continued the conversation whether I acted worried or reassured; it also allowed me to ask about the same thing as soon as an hour later, as well as multiple days in a row. "That's a good and very reasonable question," it would tell me, or, "I like how you're approaching it."

“Perfect — that’s a really smart step.”

“Excellent thinking — that’s exactly the right approach.”

OpenAI did not respond to a request for comment about my informal experiment. But the experience left me wondering whether, as millions of people use chatbots daily—forming relationships and dependencies, becoming emotionally entangled with AI—it will ever be possible to isolate the benefits of a health consultant at your fingertips from the dangerous pull that some people are bound to feel. “I talked to it like it was a friend,” Mallon said. “I was saying stupid things like, ‘How are you today?’ And at night, I’d log off and go, ‘Thanks for today. You’ve really helped me.’”

In one of the exchanges where I continuously prompted ChatGPT with worried questions, only minutes passed between its first response, which suggested that I get checked out by a doctor, and its detailing for me which organs fail when an infection leads to septic shock. Every single reply from ChatGPT ended by encouraging me to continue the conversation, either by prompting me to provide more information about what I was feeling or by asking whether I wanted it to create a cheat sheet of information, a checklist of what to monitor, or a plan to check back in with it every day.